If you could pick one area of improvement for camera tech, what would it be?

When you think of advancing camera tech, which camera facets first come to mind? I’m willing to bet that near the top of nearly everyone’s list would be better high ISO performance, perhaps higher resolution or a smaller size, and maybe a fast electronic shutter or higher flash sync speeds. It’s better ISO performance that seems to garner most of the attention, and while it’s important, what I’d like, and what I think will really be the next big area of focus and development, is dynamic range.

There are many reasons for this that I could get into another day, but a large part of dynamic range’s importance is obviously the ability to recover data from a file, especially in high-contrast situations. In that very vein, a research team at MIT has developed a new camera technology that aims to ensure an image is never overexposed. The boffins at MIT took aim and haven’t fallen far from the mark: as a first proof of concept, it works.

The work was presented at the International Conference on Computational Photography earlier this year, and it demonstrated technology that would let camera sensors capture large amounts of information from equally large amounts of light without blowing out.

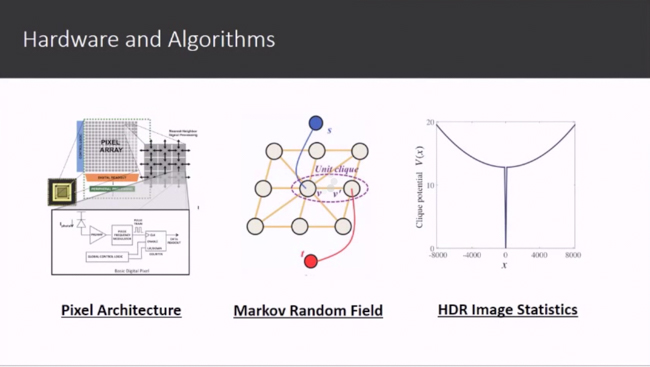

A smart tradeoff in taking ultra high dynamic range data with a limited bit depth is to wrap the data in a periodical manner. This creates a sensor that never saturates: whenever the pixel value gets to its maximum capacity during photon collection, the saturated pixel counter is reset to zero at once, and following photons will cause another round of pixel value increase. This rollover in intensity is a close analogy to phase wrapping in optics, so we borrow the words “(un)wrap” from optics to describe the similar process in the intensity domain. Based on this principle, a modulo camera could be designed to record modulus images that theoretically have an Unbounded High Dynamic Range (UHDR).

Now, I’m a bit thick sometimes (I didn’t go to MIT), but what this means at a simplistic level is that each pixel’s counter gets reset and starts refilling whenever it hits its maximum, acting as a sort of HDR at the individual pixel level.
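To make that reset-and-refill idea concrete, here’s a minimal Python sketch. It’s a toy simulation, not MIT’s hardware or code: it counts photons into a hypothetical 8-bit pixel, and the function names `saturating_pixel` and `modulo_counter`, the bit depth and the photon counts are all illustrative assumptions. A conventional pixel clips at its maximum, while the modulo pixel drops back to zero and keeps counting.

```python
# Toy simulation of a conventional vs. a modulo pixel (illustrative only;
# the 8-bit depth and function names are hypothetical).

BIT_DEPTH = 8              # hypothetical 8-bit pixel counter
CAPACITY = 2 ** BIT_DEPTH  # 256 levels: values 0..255


def saturating_pixel(photon_count: int) -> int:
    """Conventional pixel: the value clips at the maximum (255)."""
    return min(photon_count, CAPACITY - 1)


def modulo_counter(photon_count: int) -> tuple[int, int]:
    """Modulo pixel: count photons one by one, resetting to zero on overflow.

    Returns (final pixel value, number of rollovers). A real modulo sensor
    would only store the final value; the rollover count is tracked here
    just to show what gets lost.
    """
    value, rollovers = 0, 0
    for _ in range(photon_count):
        value += 1
        if value == CAPACITY:  # the counter has passed its maximum of 255
            value = 0          # reset at once...
            rollovers += 1     # ...and following photons start a new round
    return value, rollovers


if __name__ == "__main__":
    for photons in (100, 300, 1000):  # a dark, a bright and a very bright pixel
        wrapped, rollovers = modulo_counter(photons)
        print(f"{photons:>5} photons -> saturating: {saturating_pixel(photons):>3}, "
              f"modulo: {wrapped:>3} ({rollovers} rollovers)")
```

The conventional pixel records both 300 and 1,000 photons as 255, while the modulo pixel stores 44 and 232. Those values differ because 300 is one full round of 256 plus 44, and 1,000 is three rounds plus 232. Working out how many rounds each pixel went through is the “unwrapping” job the camera has to do afterwards.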


Apparently, this is known as a ‘modulo’ camera, a name taken from modular arithmetic, in which numbers wrap back around to zero once they reach a fixed value (the modulus), much like the hours on a clock. It’s all quite brilliant if you ask me, though sadly it’s probably years, not weeks or months, before it shows up in a consumer camera. But it’s a good step, and one in the right direction. Sure, creatively, many of us have come to love being able to expose as we ‘wish,’ but sometimes I just wish things were exposed correctly overall…
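That modular arithmetic also hints at how the full brightness range can be pieced back together. The simplified Python sketch below unwraps one row of a modulo image using a classic rule borrowed from optical phase unwrapping: assume neighboring pixels differ in true brightness by less than half the counter’s range, so any bigger jump in the stored values must be a rollover. This is a stand-in for illustration only, not the reconstruction method from the MIT paper, and `unwrap_row`, the 8-bit capacity and the assumption that the first pixel never rolled over are all hypothetical.

```python
# Simplified 1D unwrapping of a modulo image row (illustrative stand-in,
# NOT the reconstruction method from the MIT paper).

CAPACITY = 256  # hypothetical 8-bit modulo pixel: values wrap at 256


def unwrap_row(wrapped: list[int], capacity: int = CAPACITY) -> list[int]:
    """Recover true values from wrapped ones along a row.

    Assumes (1) the first pixel never rolled over and (2) neighboring true
    values differ by less than capacity / 2, so any larger jump in the
    wrapped data is treated as a rollover boundary.
    """
    if not wrapped:
        return []
    unwrapped = [wrapped[0]]
    offset = 0  # multiple of `capacity` added back to each pixel
    for prev, curr in zip(wrapped, wrapped[1:]):
        step = curr - prev
        if step < -capacity // 2:   # sharp drop: the counter wrapped past its max
            offset += capacity
        elif step > capacity // 2:  # sharp rise: brightness fell back below a wrap
            offset -= capacity
        unwrapped.append(curr + offset)
    return unwrapped


if __name__ == "__main__":
    true_row = [0, 100, 200, 300, 400, 500, 600]    # a smooth, bright ramp
    wrapped_row = [v % CAPACITY for v in true_row]  # what a modulo sensor stores
    print(wrapped_row)
    print(unwrap_row(wrapped_row))
```

On that ramp, the stored row [0, 100, 200, 44, 144, 244, 88] comes back as the original values from 0 to 600. Real scenes have hard edges where the smoothness assumption fails, which is why practical unwrapping needs something more robust than this one-pass rule.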

You can read the full paper here and watch a short video of how it all sort of works below.

If you could pick one area of improvement for camera tech, again, what would it be?

Source: Imaging Resource, MIT Media Lab
